Data Aggregation Best Practices

The Definition of Data Aggregation

Data aggregation is the process of gathering data from one or more sources and combining it into a summarized form. Specifically, individual records are retrieved from multiple sources and compiled into a brief summary, such as totals or other useful statistics.

Data aggregation is especially useful for data analysis because it lets analysts review a large quantity of data at a glance.

Data Aggregation Process

In general, an aggregation process includes the following three steps:

- Retrieve data from diverse sources: A data aggregator collects data from multiple sources, such as different databases, spreadsheets, and HTML documents.

- Filter and arrange the input data: This step ensures that the data is accurate and consistent before it is aggregated. The collected data is filtered and preprocessed to eliminate inconsistencies, errors, and missing values.

- Combine and compile data: The processed data is combined into a single dataset. This final step consists of joining, merging, and summarizing the data into a meaningful and concise form. Creating simplified views, computing summary statistics, and producing pivot tables all happen in this step.
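The three steps above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a production pipeline: the two sources (`CSV_SOURCE` and `DB_ROWS`), the field names, and the cleaning rules are all assumptions made for the example.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Hypothetical inputs standing in for two different sources.
CSV_SOURCE = """region,amount
north,100
south,250
north,
"""

DB_ROWS = [
    {"region": "North", "amount": "50"},
    {"region": "south", "amount": "n/a"},
    {"region": "east", "amount": "75"},
]


def retrieve():
    """Step 1: collect raw records from every source."""
    csv_rows = list(csv.DictReader(io.StringIO(CSV_SOURCE)))
    return csv_rows + DB_ROWS


def clean(rows):
    """Step 2: normalize region names and drop rows with missing or invalid amounts."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except (TypeError, ValueError):
            continue  # skip empty or non-numeric amounts
        cleaned.append({"region": row["region"].strip().lower(), "amount": amount})
    return cleaned


def aggregate(rows):
    """Step 3: group by region and compute count, total, and average."""
    groups = defaultdict(list)
    for row in rows:
        groups[row["region"]].append(row["amount"])
    return {
        region: {"count": len(values), "total": sum(values), "average": mean(values)}
        for region, values in groups.items()
    }


summary = aggregate(clean(retrieve()))
```

Keeping each step in its own function makes it easier to swap in a real source or a stricter cleaning rule without touching the aggregation logic.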

There are many aggregation techniques and tools that let you aggregate data in different ways. The aggregated data is then stored in a data warehouse for further analysis or used to make business decisions.

Data Aggregation Best Practices

Now that you know how data aggregation works, it is important to understand the best practices before you start aggregating data.

1. Use Cases of Data Aggregation

A. Finance: Financial institutions aggregate data from several sources to evaluate the creditworthiness of their clients and decide, for example, whether to approve a loan. Aggregated data is also important for researching and understanding the state of the stock market.

B. Healthcare: Medical institutions use data aggregated from health tests, health records, and lab results to improve treatment and care decisions.

C. Marketing: Data collected from company websites and social networks can be used to monitor mentions, hashtags, and interactions, which shows whether a marketing strategy has worked. In addition, sales and customer data is aggregated to plan subsequent marketing activities.

D. Software Monitoring: Monitoring software collects and aggregates application and network data at regular intervals to track application performance, detect new errors, and troubleshoot problems.

E. Big Data: Data aggregation makes it easier to make use of data available around the world and store it in a data warehouse for later use.
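As an illustration of the software monitoring use case (D), the sketch below groups request samples into fixed time windows and summarizes each one. The sample tuples, the 60-second window, and the metric names are assumptions chosen for the example, not the output of any particular monitoring tool.

```python
from collections import defaultdict

INTERVAL_SECONDS = 60  # assumed aggregation window

# Hypothetical samples: (unix timestamp, response time in ms, request failed?)
SAMPLES = [
    (1_700_000_000, 120, False),
    (1_700_000_030, 80, True),
    (1_700_000_065, 200, False),
]


def aggregate_by_interval(samples, interval=INTERVAL_SECONDS):
    """Group samples into fixed windows and summarize each window."""
    buckets = defaultdict(list)
    for timestamp, latency_ms, failed in samples:
        # Round the timestamp down to the start of its window.
        window_start = timestamp - timestamp % interval
        buckets[window_start].append((latency_ms, failed))
    return {
        start: {
            "requests": len(items),
            "avg_latency_ms": sum(latency for latency, _ in items) / len(items),
            "errors": sum(1 for _, failed in items if failed),
        }
        for start, items in sorted(buckets.items())
    }


report = aggregate_by_interval(SAMPLES)
```

Each key in `report` is the start of a window, so the result can be plotted or compared against an alert threshold window by window.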

2. Challenges in Data Aggregation

A. Combining Diverse Types of Data

Because input data comes from different sources, it may arrive in different formats. The data aggregator has to process, standardize, and transform the data before aggregating it, which is a complicated and tedious process. This makes data parsing, the conversion of raw data into an easier-to-use format, an essential step before aggregation.
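To make the parsing step concrete, here is a minimal sketch that maps two differently formatted payloads onto one common schema. The payloads, the field names (`product`, `price`, `date`), and the source conventions (semicolon delimiter, decimal comma, day/month/year dates) are all hypothetical.

```python
import csv
import io
import json
from datetime import datetime

# Hypothetical payloads: the same kind of record in two formats.
JSON_PAYLOAD = '[{"product": "Widget", "price": "19.99", "date": "2024-03-01"}]'
CSV_PAYLOAD = "name;cost;day\nGadget;5,50;01/03/2024\n"


def parse_json(text):
    """Map JSON records onto the common schema."""
    return [
        {
            "product": item["product"],
            "price": float(item["price"]),
            "date": datetime.strptime(item["date"], "%Y-%m-%d").date(),
        }
        for item in json.loads(text)
    ]


def parse_csv(text):
    """Map semicolon-delimited rows (decimal comma, day/month/year) onto the common schema."""
    reader = csv.DictReader(io.StringIO(text), delimiter=";")
    return [
        {
            "product": row["name"],
            "price": float(row["cost"].replace(",", ".")),
            "date": datetime.strptime(row["day"], "%d/%m/%Y").date(),
        }
        for row in reader
    ]


# After parsing, every record has the same keys and value types.
records = parse_json(JSON_PAYLOAD) + parse_csv(CSV_PAYLOAD)
```

Writing one small parser per source keeps format-specific quirks out of the aggregation code, which then only ever sees the common schema.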

B. Ensuring Privacy

Privacy is a priority whenever data is processed, and data aggregation is no exception. You may need to use Personally Identifiable Information (PII) to produce a summary that represents a group, such as when publishing the public results of an election or a poll. For this reason, data aggregation is usually combined with data anonymization. Failure to comply with privacy regulations, such as those in the EU, can lead to legal issues and penalties.
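One common way to combine aggregation with anonymization is to drop identifying fields before counting and to withhold groups that are too small to hide an individual. The sketch below illustrates the idea with hypothetical poll responses. The list of PII fields and the minimum group size are assumptions, and a technique like this does not by itself guarantee compliance with any regulation.

```python
from collections import Counter

# Hypothetical poll responses containing PII (name, email).
RESPONSES = [
    {"name": "Ana", "email": "ana@example.com", "district": "A", "choice": "yes"},
    {"name": "Ben", "email": "ben@example.com", "district": "A", "choice": "no"},
    {"name": "Cy", "email": "cy@example.com", "district": "A", "choice": "yes"},
    {"name": "Di", "email": "di@example.com", "district": "B", "choice": "yes"},
]

PII_FIELDS = {"name", "email"}  # assumed list of identifying fields
MIN_GROUP_SIZE = 3  # assumed threshold; smaller groups are not published


def strip_pii(record):
    """Return a copy of the record without identifying fields."""
    return {key: value for key, value in record.items() if key not in PII_FIELDS}


def aggregate_poll(responses):
    """Count choices per district, suppressing districts with too few responses."""
    per_district = {}
    for record in map(strip_pii, responses):
        per_district.setdefault(record["district"], Counter())[record["choice"]] += 1
    return {
        district: dict(counts)
        for district, counts in per_district.items()
        if sum(counts.values()) >= MIN_GROUP_SIZE
    }


results = aggregate_poll(RESPONSES)
```

District B is left out of `results` because a single response would reveal how one person answered.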

C. Producing High-Quality Results

Source data is the critical factor affecting the reliability of aggregation results. It is therefore essential to ensure that the collected data is complete, accurate, and consistent.
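A simple way to enforce these three properties is to validate every record before it reaches the aggregation step. The following sketch checks completeness (required fields present), accuracy (amount is a non-negative number), and consistency (no duplicate IDs). The schema and the rules are assumptions for illustration and would need to match your own data.

```python
REQUIRED_FIELDS = ("id", "region", "amount")  # assumed schema


def validate(rows):
    """Split rows into valid records and (row, reason) rejections."""
    valid, rejected, seen_ids = [], [], set()
    for row in rows:
        missing = [field for field in REQUIRED_FIELDS if row.get(field) in (None, "")]
        if missing:
            # Completeness: every required field must have a value.
            rejected.append((row, f"missing: {', '.join(missing)}"))
        elif not isinstance(row["amount"], (int, float)) or row["amount"] < 0:
            # Accuracy: amounts must be non-negative numbers.
            rejected.append((row, "invalid amount"))
        elif row["id"] in seen_ids:
            # Consistency: the same record must not be counted twice.
            rejected.append((row, "duplicate id"))
        else:
            seen_ids.add(row["id"])
            valid.append(row)
    return valid, rejected


rows = [
    {"id": 1, "region": "north", "amount": 100},
    {"id": 2, "region": "", "amount": 40},
    {"id": 3, "region": "south", "amount": -5},
    {"id": 1, "region": "north", "amount": 100},
]
valid, rejected = validate(rows)
```

Returning the rejected rows together with a reason, rather than silently dropping them, makes it possible to trace quality problems back to the source that produced them.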

3. Data Aggregation With YiluProxy

As mentioned above, a data aggregation process begins with retrieving data from diverse sources. A data aggregator can use previously collected data or retrieve it directly. Importantly, the results of aggregation depend on the quality of that data, which means data collection plays a crucial role in data aggregation.

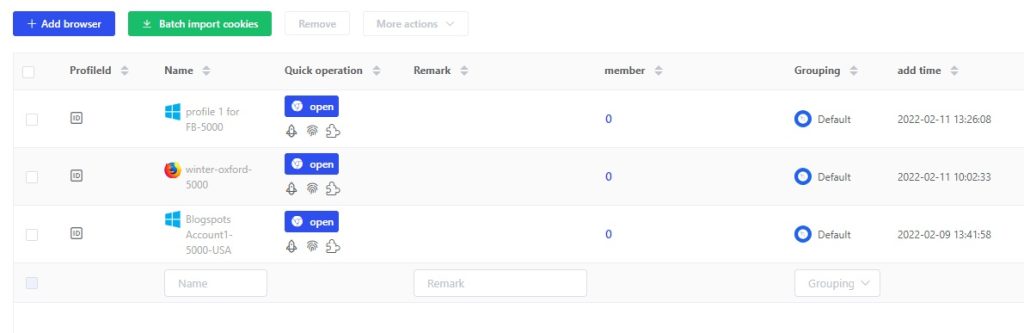

Luckily, YiluProxy makes data collection easier, since its advanced proxy technology helps you avoid website restrictions and IP blocks. You can then aggregate the data you need with far less effort.

You can use these datasets in many situations. For instance, in the travel industry, aggregated data helps companies compare prices with competitors, monitor customers' search habits and travel plans, and forecast upcoming tourism trends.
